DNNL implements some basic capabilities of operation fusion through the post-ops attributes API. Fusion typically reduces memory bandwidth pressure, which leads to higher performance.

The post-ops change the default behavior of a primitive and hence are implemented through the Primitive Attributes mechanism.

Currently the following post-ops are supported by the library:

| Post-ops \ Primitive | Convolution | Inner Product | |
|---|---|---|---|
| Eltwise | Partial | Partial | Partial |
| Sum | Partial | N/A | N/A |
| Depthwise | Partial | N/A | N/A |

Just like primitive attributes, the post-ops are represented by an opaque structure (dnnl_post_ops_t in the C API and dnnl::post_ops in the C++ API), which is copied when it is attached to the attributes with the C++ dnnl::primitive_attr::set_post_ops or the C dnnl_primitive_attr_set_post_ops function, so later changes to the post-ops object do not affect the attributes. The attributes are then passed to a primitive descriptor creation function to take effect.

- Note

- Different post-ops can be chained together by appending one after another. Note that the appending order matters: the post-ops are executed in the order in which they were appended.
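As a rough illustration of why the order matters, the sketch below models each post-op as a function of the value computed so far and the previous destination value, and applies a chain strictly in append order. PostOp, apply_chain, relu, and sum are hypothetical names for this model only; they are not part of the DNNL API, and the library does not necessarily execute post-ops this way internally.

```cpp
#include <algorithm>
#include <cassert>
#include <functional>
#include <vector>

// Conceptual model (not the library's implementation): a post-op maps
// (value computed so far, previous dst value) to a new value.
using PostOp = std::function<float(float acc, float prev_dst)>;

// Applies the post-ops strictly in append order to the primitive result `op`.
float apply_chain(float op, float prev_dst, const std::vector<PostOp> &chain) {
    float acc = op;
    for (const PostOp &p : chain) acc = p(acc, prev_dst);
    return acc;
}

// ReLU eltwise post-op (scale 1.0, alpha 0).
const PostOp relu = [](float acc, float /*prev_dst*/) { return std::max(acc, 0.0f); };

// Sum post-op (scale 1.0): accumulates into the previous destination value.
const PostOp sum = [](float acc, float prev_dst) { return prev_dst + acc; };
```

With a primitive result of -3 and a previous destination value of 1, sum followed by ReLU yields 0, whereas ReLU followed by sum yields 1.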

- Warning

- Different primitives have different levels of support for post-ops. Moreover, the support might also depend on the actual implementation of a primitive. For instance, the library generally doesn't support post-ops for reference implementations (which are typically very slow, so there is no point in doing the actual fusion). A robust integration should therefore handle errors accordingly; see the section on attributes error handling.
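The error-handling advice can be sketched as a fallback: try the fused configuration first and, if the implementation rejects the post-op, run the primitive without it and apply the activation as a separate pass. Everything here is hypothetical: Unimplemented stands in for an "unimplemented" error from primitive descriptor creation, and run_primitive is a toy primitive computing 2 * src whose fusion_supported flag models whether the selected implementation accepts the post-op.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <stdexcept>
#include <vector>

// Hypothetical error standing in for an "unimplemented" status returned when
// an implementation cannot fuse the requested post-op.
struct Unimplemented : std::runtime_error {
    using std::runtime_error::runtime_error;
};

// Hypothetical primitive computing dst = 2 * src, optionally with a fused ReLU.
std::vector<float> run_primitive(const std::vector<float> &src, bool fuse_relu,
                                 bool fusion_supported) {
    if (fuse_relu && !fusion_supported)
        throw Unimplemented("post-op not supported by this implementation");
    std::vector<float> dst(src.size());
    for (std::size_t i = 0; i < src.size(); ++i) {
        float v = 2.0f * src[i];
        dst[i] = fuse_relu ? std::max(v, 0.0f) : v;
    }
    return dst;
}

// Tries the fused version first; on failure runs the primitive without the
// post-op and applies ReLU as a separate pass over the destination.
std::vector<float> run_with_fallback(const std::vector<float> &src,
                                     bool fusion_supported) {
    try {
        return run_primitive(src, /*fuse_relu=*/true, fusion_supported);
    } catch (const Unimplemented &) {
        std::vector<float> dst = run_primitive(src, /*fuse_relu=*/false, fusion_supported);
        for (float &v : dst) v = std::max(v, 0.0f);
        return dst;
    }
}
```

Both paths produce the same values; only the fused path avoids the extra pass over the destination.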

The post-ops object can be inspected with the dnnl::post_ops::kind() function, which takes the index of a post-op (the index must be less than the value returned by dnnl::post_ops::len()) and returns its kind.
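A toy model can make the copy-on-attach and inspection semantics concrete. PostOps, PrimitiveAttr, Kind, and count_kind are hypothetical stand-ins for dnnl::post_ops, dnnl::primitive_attr, and dnnl::primitive::kind; only len() and kind(index) mirror the documented behavior, and the real classes are opaque handles rather than plain vectors.

```cpp
#include <cassert>
#include <vector>

// Hypothetical kinds; the real library reports dnnl::primitive::kind values.
enum class Kind { eltwise, sum, convolution };

// Hypothetical stand-in for dnnl::post_ops: an ordered list of post-op kinds.
class PostOps {
public:
    void append(Kind k) { entries_.push_back(k); }
    int len() const { return static_cast<int>(entries_.size()); }
    // Mirrors the documented precondition: index must be less than len().
    Kind kind(int index) const {
        assert(index >= 0 && index < len());
        return entries_[index];
    }
private:
    std::vector<Kind> entries_;
};

// Hypothetical stand-in for dnnl::primitive_attr: stores a copy of the post-ops.
class PrimitiveAttr {
public:
    void set_post_ops(const PostOps &ops) { ops_ = ops; }
    const PostOps &get_post_ops() const { return ops_; }
private:
    PostOps ops_;
};

// Counts how many post-ops of a given kind an attribute carries.
int count_kind(const PrimitiveAttr &attr, Kind k) {
    const PostOps &ops = attr.get_post_ops();
    int n = 0;
    for (int i = 0; i < ops.len(); ++i)
        if (ops.kind(i) == k) ++n;
    return n;
}
```

Appending to the original object after set_post_ops leaves the attribute's copy unchanged, which matches the copying behavior described above.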

Supported Post-ops

Eltwise Post-op

The eltwise post-op enables fusing a primitive with an Eltwise primitive. This is probably one of the most popular kinds of fusion: an eltwise operation (typically an activation function) fused with a preceding convolution or inner product.

The dnnl::primitive::kind of this post-op is dnnl::primitive::kind::eltwise.

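API:

- C: dnnl_post_ops_append_eltwise

- C++: dnnl::post_ops::append_eltwise

The parameters (C++ API for simplicity): the function takes a scale factor (described below) followed by the alg, alpha, and beta parameters, which are the same as in Eltwise.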

The Eltwise post-op replaces:

\[ \dst(:) = \operatorname{Op}(...) \]

with

\[ \dst(:) = scale \cdot \operatorname{Eltwise}( \operatorname{Op}(...) ) \]

The intermediate result of \(\operatorname{Op}(...)\) is not stored. Hence, in most cases this kind of fusion cannot be used for training, where backward propagation usually needs that intermediate result.

The \(scale\) factor is supported in INT8 inference only. For other cases the scale must be equal to 1.0.
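The eltwise formula can be checked with a small reference model. The sketch assumes the ReLU algorithm, where alpha is the negative slope (alpha = 0 gives the plain ReLU) and beta is unused; eltwise_relu_post_op is a hypothetical helper, and op holds the values the primitive would produce before the post-op, which the fused primitive never stores. A scale other than 1.0 appears here for arithmetic only; per the text, it is meaningful only in INT8 inference.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Reference model of dst = scale * ReLU(op), with alpha as the negative slope.
std::vector<float> eltwise_relu_post_op(const std::vector<float> &op,
                                        float scale, float alpha) {
    std::vector<float> dst(op.size());
    for (std::size_t i = 0; i < op.size(); ++i) {
        float x = op[i];
        float relu = x > 0.0f ? x : alpha * x;  // ReLU with negative slope alpha
        dst[i] = scale * relu;                  // post-op scale applied last
    }
    return dst;
}
```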

Sum Post-op

Appends an accumulation (sum) post-op. Before the result is accumulated, the previous value of the destination is multiplied by scale.

The kind of this post-op is dnnl::primitive::kind::sum.

This feature might improve performance in cases like residual learning blocks, where the result of a convolution is accumulated into the previously computed activations. The scale parameter can be used only in INT8 inference, when the result and the previous activations have different logical scaling factors.

The sum post-op replaces

\[ \dst(:) = \operatorname{Op}(...) \]

with

\[ \dst(:) = scale \cdot \dst(:) + \operatorname{Op}(...) \]

- Warning

- This post-op (as well as all the others) disregards the original layout of the destination; that is, the layout of the original destination is expected to be the same as the layout of the output destination.
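A minimal reference model of the sum post-op, assuming f32 buffers that share the same layout as the warning requires; sum_post_op is a hypothetical helper, not a library function. It updates dst in place, reading each previous destination value before overwriting it.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// dst = scale * dst + op, element by element, in place.
// `dst` holds the previously computed activations; `op` is the primitive result.
void sum_post_op(std::vector<float> &dst, const std::vector<float> &op, float scale) {
    assert(dst.size() == op.size());
    for (std::size_t i = 0; i < dst.size(); ++i)
        dst[i] = scale * dst[i] + op[i];
}
```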

Depthwise Post-op

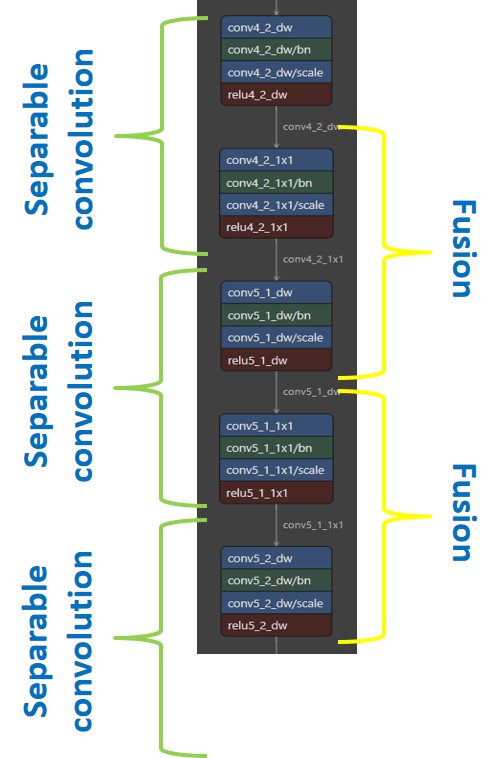

Appends a depthwise convolution as a post-op. This post-op can only be fused with a 1x1 convolution, a pattern common in models (like MobileNet_v1) that use a stack of separable convolutions: a depthwise convolution followed by a 1x1 convolution. Such a stack provides an opportunity to fuse each 1x1 convolution with the bandwidth-limited depthwise convolution that follows it.

The dnnl::primitive::kind of this post-op is dnnl::primitive::kind::convolution.

There are two variants of this post-op: dw_k3s1p1 and dw_k3s2p1 for stride 1 and stride 2, respectively.

API:

- C: dnnl_post_ops_append_dw_k3s1p1 , dnnl_post_ops_append_dw_k3s2p1

- C++: dnnl::post_ops::append_dw_k3s1p1 , dnnl::post_ops::append_dw_k3s2p1

For better readability, below we assume a 2D convolution and use the following notation:

- conv_1x1: convolution with a weights spatial size of 1, i.e., kh = kw = 1.

- conv_dw: depthwise convolution with a weights spatial size of 3, i.e., kh = kw = 3, g = oc = ic, and pad_l = pad_r = {1, 1}.

The Depthwise post-op replaces

\[ dst(:) = Conv_{1x1}(...) \]

with

\[ dst(:) = Conv_{dw}(Conv_{1x1}(...)) \]

The final output dimensions after the post-op are defined as

\[ dst_{conv_dw} = \{ n, oc_{1x1}, \operatorname{ceil}(oh_{conv_{1x1}}/stride), \operatorname{ceil}(ow_{conv_{1x1}}/stride) \} \]

where oh_conv_1x1 and ow_conv_1x1 are the height and width of the conv_1x1 destination, and stride is 1 or 2 depending on the variant.
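The output-shape rule can be written as a small helper. dw_post_op_dst_dims is a hypothetical name; it applies \(\operatorname{ceil}(h/stride)\) through integer ceiling division. This agrees with the usual convolution output size for a 3x3 kernel with one element of padding on each side, \(\lfloor (h + 2 - 3)/stride \rfloor + 1 = \lceil h/stride \rceil\).

```cpp
#include <array>
#include <cassert>

// Returns {n, oc, oh, ow} of the depthwise post-op output given the conv_1x1
// destination dims. Only stride 1 (dw_k3s1p1) and stride 2 (dw_k3s2p1) exist.
std::array<int, 4> dw_post_op_dst_dims(int n, int oc_1x1, int oh_1x1, int ow_1x1,
                                       int stride) {
    assert(stride == 1 || stride == 2);
    auto ceil_div = [](int a, int b) { return (a + b - 1) / b; };
    return std::array<int, 4>{{n, oc_1x1, ceil_div(oh_1x1, stride), ceil_div(ow_1x1, stride)}};
}
```

For example, a 112x112 conv_1x1 destination with the stride-2 variant gives a 56x56 depthwise output, and a 7x5 destination gives 4x3.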

Supported data types

| conv 1x1 output data type | depthwise post-op output data type | depthwise post-op weights data type | dep |
|---|---|---|---|
| u8, s8 | u8, s8, s32, f32 | s8 | f32, s32 |
| f32 | f32 | f32 | f32 |
| bf16 | bf16, f32 | bf16 | f32, bf16 |

- Note

- Currently supported only for 2D 1x1 convolution.

- Only the eltwise post-op can be part of the same post-op chain (i.e., the sum post-op is not supported).

- The dst_1x1, wei_dw, and dst_dw are assumed to be dnnl_format_tag_any.

Examples of Chained Post-ops

Different post-ops can be chained together by appending one after another. Note that the order matters: the post-ops are executed in the order they have been appended.

Let's consider some examples.

Sum -> ReLU

This pattern is common in CNN topologies from the ResNet family.

Appending a sum post-op followed by a ReLU eltwise post-op (both with a scale of 1.0) to a convolution leads to the following primitive behavior:

\[ \dst(:) = \operatorname{ReLU}(\dst(:) + \operatorname{conv}(\src(:), \weights(:))) \]
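For reference, the fused Sum -> ReLU behavior on already-computed values can be modeled as below. sum_relu is a hypothetical helper; conv stands for the convolution result that the fused primitive never writes out, and dst holds the previously computed activations (for example, the skip connection of a residual block).

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// dst = ReLU(dst + conv), element by element, in place.
void sum_relu(std::vector<float> &dst, const std::vector<float> &conv) {
    assert(dst.size() == conv.size());
    for (std::size_t i = 0; i < dst.size(); ++i)
        dst[i] = std::max(dst[i] + conv[i], 0.0f);  // sum first, then ReLU
}
```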

Tanh -> Sum -> ScaleShift

This is a hypothetical example that illustrates the sequence in which operations are applied: a tanh eltwise post-op, a sum post-op, and a linear eltwise post-op (\(\alpha x + \beta\), i.e., a scale-shift). All the scales are set to values other than one, and dnnl::primitive_attr::set_output_scales, which is covered in Primitive Attributes: Quantization, is also used. Unfortunately (or fortunately), this sequence is not supported by the library and is merely used to illustrate the semantics of post-ops.

This will lead to the following primitive behavior (for better readability the tensors are designated by their names only; i.e., (:) is omitted):

\[ \dst = s_{linear} \cdot ( \alpha \cdot ( s_{sum} \cdot \dst + s_{tanh} \cdot \tanh ( s_{conv} \cdot \operatorname{conv}(\src, \weights) ) ) + \beta ) \]
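To check the formula, the sketch below evaluates the chain one step at a time for a single element, in the same order as the post-ops (output scale, tanh, sum, then linear), and compares it with the closed form. tanh_sum_linear and closed_form are hypothetical helpers whose parameter names mirror the symbols in the formula; since the library does not support this sequence, this is purely a model of the semantics.

```cpp
#include <cassert>
#include <cmath>

// Step-by-step evaluation, one line per stage, in append order.
float tanh_sum_linear(float conv, float prev_dst, float s_conv, float s_tanh,
                      float s_sum, float s_linear, float alpha, float beta) {
    float acc = s_conv * conv;              // output scale on the conv result
    acc = s_tanh * std::tanh(acc);          // eltwise post-op: tanh with scale
    acc = s_sum * prev_dst + acc;           // sum post-op
    acc = s_linear * (alpha * acc + beta);  // eltwise post-op: linear with scale
    return acc;
}

// The same value written as the closed-form expression from the formula.
float closed_form(float conv, float prev_dst, float s_conv, float s_tanh,
                  float s_sum, float s_linear, float alpha, float beta) {
    return s_linear * (alpha * (s_sum * prev_dst + s_tanh * std::tanh(s_conv * conv)) + beta);
}
```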

Relu -> Depthwise -> Relu

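An example of fusing a depthwise convolution with a 1x1 convolution in MobileNet. The 1x1 convolution uses output scales, and its post-op chain consists of a ReLU eltwise post-op, a depthwise post-op with its own scales, and a second ReLU eltwise post-op.

This leads to the following primitive behavior: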

\[ dst = ReLU_{depthwise} ( scales_{depthwise} \cdot ( conv_{depthwise} ( ReLU_{1x1} ( scales_{conv_{1x1}} \cdot ( conv_{1x1}() ) ) ) ) ) \]
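A naive two-pass reference for this chain can help when validating a fused result. It assumes a single image (n = 1), f32 data in a dense NCHW buffer, one scale per stage, no bias, and zero padding; Tensor, conv1x1_relu, and dw3x3_relu are hypothetical names. Unlike the fused primitive, the sketch materializes the 1x1 output, which is exactly the memory traffic the post-op is meant to avoid.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Dense single-image (n = 1) tensor in NCHW order; layout chosen for clarity.
struct Tensor {
    int c, h, w;
    std::vector<float> data;
    Tensor(int c_, int h_, int w_)
        : c(c_), h(h_), w(w_), data(std::size_t(c_) * h_ * w_, 0.0f) {}
    float &at(int ci, int y, int x) { return data[(std::size_t(ci) * h + y) * w + x]; }
    float at(int ci, int y, int x) const { return data[(std::size_t(ci) * h + y) * w + x]; }
};

// conv_1x1 (kh = kw = 1, no bias) followed by output scale and ReLU.
// Weights are a flat [oc][ic] array.
Tensor conv1x1_relu(const Tensor &src, const std::vector<float> &wei, int oc, float scale) {
    assert(wei.size() == std::size_t(oc) * src.c);
    Tensor dst(oc, src.h, src.w);
    for (int o = 0; o < oc; ++o)
        for (int y = 0; y < src.h; ++y)
            for (int x = 0; x < src.w; ++x) {
                float acc = 0.0f;
                for (int i = 0; i < src.c; ++i)
                    acc += wei[std::size_t(o) * src.c + i] * src.at(i, y, x);
                dst.at(o, y, x) = std::max(scale * acc, 0.0f);
            }
    return dst;
}

// conv_dw: 3x3 depthwise (g = oc = ic), zero padding of 1 on every side,
// followed by output scale and ReLU. Weights are a flat [c][3][3] array.
Tensor dw3x3_relu(const Tensor &src, const std::vector<float> &wei, int stride, float scale) {
    assert(stride == 1 || stride == 2);
    assert(wei.size() == std::size_t(src.c) * 9);
    // Output spatial size is ceil(h / stride) x ceil(w / stride).
    Tensor dst(src.c, (src.h + stride - 1) / stride, (src.w + stride - 1) / stride);
    for (int ci = 0; ci < src.c; ++ci)
        for (int oy = 0; oy < dst.h; ++oy)
            for (int ox = 0; ox < dst.w; ++ox) {
                float acc = 0.0f;
                for (int ky = 0; ky < 3; ++ky)
                    for (int kx = 0; kx < 3; ++kx) {
                        int iy = oy * stride - 1 + ky;  // -1 accounts for the padding
                        int ix = ox * stride - 1 + kx;
                        if (iy < 0 || iy >= src.h || ix < 0 || ix >= src.w) continue;
                        acc += wei[std::size_t(ci) * 9 + ky * 3 + kx] * src.at(ci, iy, ix);
                    }
                dst.at(ci, oy, ox) = std::max(scale * acc, 0.0f);
            }
    return dst;
}
```

With a 2-channel 4x4 input of ones, 1x1 weights {1, 1}, a 1x1 scale of 0.5, all-ones depthwise weights, and stride 2, the 1x1 stage yields a 1-channel 4x4 tensor of ones and the depthwise stage yields the 2x2 output {4, 6, 6, 9}; the smaller values come from windows that overlap the zero padding.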