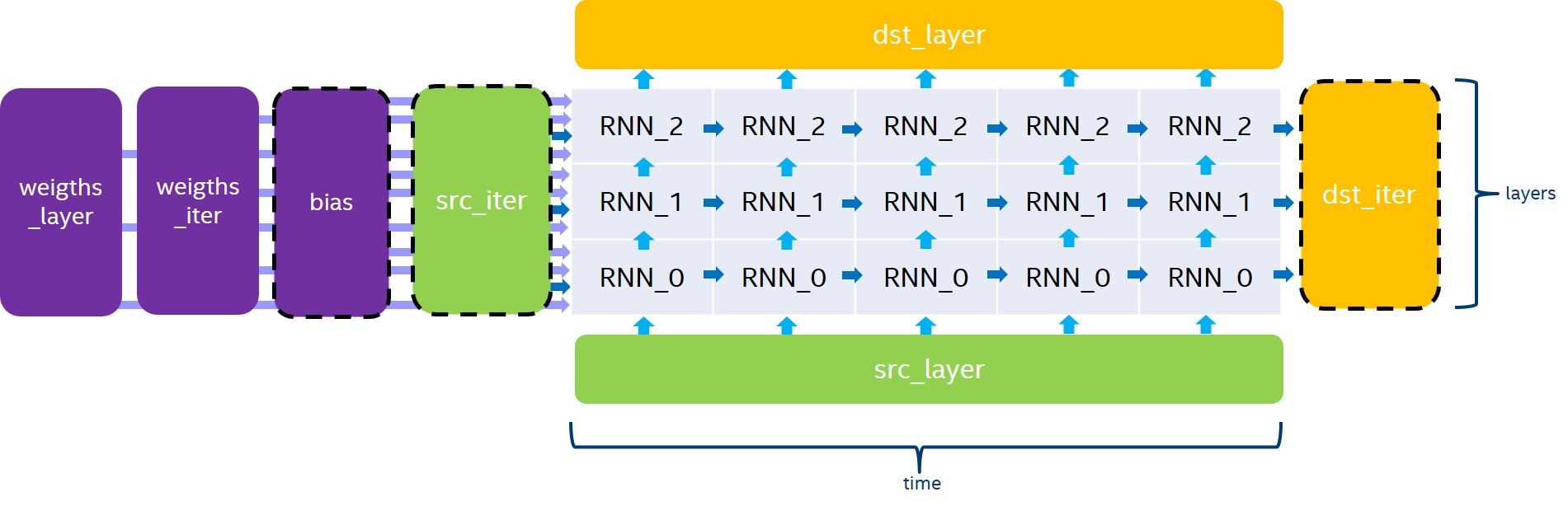

The RNN primitive computes a stack of unrolled recurrent cells, as depicted in Figure 1. bias, src_iter and dst_iter are optional parameters. If not provided, bias and src_iter will default to 0.

The RNN primitive supports four modes for evaluation direction:

- left2right processes the input timestamps in increasing order

- right2left processes the input timestamps in decreasing order

- bidirectional_concat will process all the stacked layers from left2right and from right2left independently, and will concatenate the output in dst_layer over the channel dimension.

- bidirectional_sum will process all the stacked layers from left2right and from right2left independently, and will sum the two outputs to dst_layer.
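The four directions can be sketched on a single layer with a toy cell. This is an illustration only, not the library's implementation: `run_direction`, `run_bidirectional` and `toy_cell` are hypothetical names, and the sketch reuses one cell for both directions, whereas a real bidirectional RNN would normally hold separate weights per direction.

```python
def run_direction(cell, xs, h0, direction):
    """Run one layer over all timestamps; outputs are stored in input order."""
    steps = range(len(xs))
    if direction == "right2left":
        steps = reversed(range(len(xs)))
    h = h0
    out = [None] * len(xs)
    for t in steps:
        h = cell(xs[t], h)
        out[t] = h
    return out


def run_bidirectional(cell, xs, h0, mode):
    """Combine the two independent passes as described for dst_layer."""
    l2r = run_direction(cell, xs, h0, "left2right")
    r2l = run_direction(cell, xs, h0, "right2left")
    if mode == "bidirectional_concat":
        # Concatenate over the channel dimension: channel count doubles.
        return [a + b for a, b in zip(l2r, r2l)]
    if mode == "bidirectional_sum":
        # Element-wise sum: channel count is unchanged.
        return [[p + q for p, q in zip(a, b)] for a, b in zip(l2r, r2l)]
    raise ValueError(f"unknown mode: {mode}")


def toy_cell(x, h):
    """Hypothetical cell used only for illustration: h' = x + h."""
    return [xi + hi for xi, hi in zip(x, h)]


xs = [[1.0], [2.0], [3.0]]  # T = 3 timestamps, 1 channel
h0 = [0.0]

concat_out = run_bidirectional(toy_cell, xs, h0, "bidirectional_concat")
sum_out = run_bidirectional(toy_cell, xs, h0, "bidirectional_sum")
print(concat_out)  # [[1.0, 6.0], [3.0, 5.0], [6.0, 3.0]]
print(sum_out)     # [[7.0], [8.0], [9.0]]
```

Note how the concatenated output has twice as many channels as each single-direction output, which is what motivates the separate channel rule for bidirectional_concat.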

Even though the RNN primitive supports passing a different number of channels for src_layer, src_iter, dst_layer, and dst_iter, the following conditions are always required for the dimensions to be consistent:

- \(channels(dst\_layer) = channels(dst\_iter)\),

- when \(T > 1\), \(channels(src\_iter) = channels(dst\_iter)\),

- when \(L > 1\), \(channels(src\_layer) = channels(dst\_layer)\),

- when using the bidirectional_concat direction, \(channels(dst\_layer) = 2 * channels(dst\_iter)\).
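The conditions above can be expressed as a small validation helper. This is a sketch under stated assumptions: `check_channels` is a hypothetical function, not part of the DNNL API, and it assumes that the bidirectional_concat rule replaces the first rule rather than applying in addition to it, since both cannot hold at once.

```python
def check_channels(src_layer, dst_layer, dst_iter, T, L, direction, src_iter=None):
    """Return a list of violated channel-consistency conditions (empty if none).

    All tensor arguments are channel counts. src_iter is optional, mirroring
    the primitive, and is only checked when provided.
    """
    problems = []
    if direction == "bidirectional_concat":
        # Assumption: this rule takes the place of dst_layer == dst_iter.
        if dst_layer != 2 * dst_iter:
            problems.append("channels(dst_layer) != 2 * channels(dst_iter)")
    elif dst_layer != dst_iter:
        problems.append("channels(dst_layer) != channels(dst_iter)")
    if T > 1 and src_iter is not None and src_iter != dst_iter:
        problems.append("channels(src_iter) != channels(dst_iter)")
    if L > 1 and src_layer != dst_layer:
        problems.append("channels(src_layer) != channels(dst_layer)")
    return problems


# A single layer may change the channel count from 16 to 32 ...
ok_single = check_channels(16, 32, 32, T=5, L=1, direction="left2right", src_iter=32)
# ... but a stack of two layers may not.
bad_stack = check_channels(16, 32, 32, T=5, L=2, direction="left2right", src_iter=32)
print(ok_single)  # []
print(bad_stack)  # ['channels(src_layer) != channels(dst_layer)']
```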

The general formula for the execution of a stack of unrolled recurrent cells depends on the current iteration of the previous layer (\(h_{t,l-1}\) and \(c_{t,l-1}\)) and the previous iteration of the current layer (\(h_{t-1, l}\)). Here is the exact equation for non-LSTM cells:

\[ \begin{align} h_{t, l} = Cell(h_{t, l-1}, h_{t-1, l}) \end{align} \]

where \(t,l\) are the indices of the timestamp and the layer of the cell being executed.

And here is the equation for LSTM cells:

\[ \begin{equation*} (h_{t, l},c_{t,l}) = Cell(h_{t, l-1}, h_{t-1, l}, c_{t-1,l}) \end{equation*} \]

where \(t,l\) are the indices of the timestamp and the layer of the cell being executed.
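The non-LSTM recurrence can be sketched as a double loop over timestamps and layers. This is an illustration only: `run_stack` and `toy_cell` are hypothetical names, the boundary values are assumed to be \(h_{t,-1} = src\_layer_t\) and \(h_{-1,l} = src\_iter_l\), and scalars stand in for the channel vectors.

```python
def run_stack(cell, src_layer, src_iter):
    """Evaluate h[t][l] = cell(h[t][l-1], h[t-1][l]) for a left2right stack.

    src_layer: one input per timestamp (length T).
    src_iter:  one initial state per layer (length L).
    Returns (dst_layer, dst_iter): the top-layer output for every timestamp
    and the last-timestamp state for every layer.
    """
    prev_iter = list(src_iter)  # holds h[t-1][l] for each layer l
    dst_layer = []
    for x in src_layer:  # timestamps t = 0 .. T-1
        below = x  # h[t][l-1] for the first layer is the input
        for l in range(len(prev_iter)):
            h = cell(below, prev_iter[l])
            prev_iter[l] = h  # becomes h[t-1][l] at the next timestamp
            below = h         # becomes h[t][l-1] for the next layer
        dst_layer.append(below)
    return dst_layer, prev_iter


def toy_cell(h_below, h_prev):
    """Hypothetical cell used only for illustration."""
    return h_below + h_prev


dst_layer, dst_iter = run_stack(toy_cell, [1, 2, 3], [0, 0])  # T = 3, L = 2
print(dst_layer)  # [1, 4, 10]
print(dst_iter)   # [6, 10]
```

For LSTM cells the same loop applies, with the per-layer state extended to the pair \((h, c)\).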

Cell Functions

The RNN API provides four cell functions:

- Vanilla RNN, a single-gate recurrent cell,

- LSTM, a four-gate long short-term memory cell,

- GRU, a three-gate gated recurrent unit cell,

- Linear-before-reset GRU, a three-gate recurrent unit cell with the linear layer before the reset gate.

Vanilla RNN

A single-gate recurrent cell initialized with vanilla_rnn_forward::desc or vanilla_rnn_backward::desc.

The Vanilla RNN cell supports the ReLU, Tanh and Sigmoid activation functions. The following equations define the mathematical operation performed by the Vanilla RNN cell for the forward pass:

\[ \begin{align} a_t &= W \cdot h_{t,l-1} + U \cdot h_{t-1, l} + B \\ h_t &= activation(a_t) \end{align} \]
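The forward equations above translate directly into code. The following is a plain-Python sketch of the math, not the DNNL implementation; `matvec`, `ACTIVATIONS` and `vanilla_rnn_cell` are hypothetical names, and weights are stored as row-major lists of rows.

```python
import math


def matvec(M, v):
    """Dense matrix-vector product; M is a list of rows."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


ACTIVATIONS = {
    "relu": lambda a: max(a, 0.0),
    "tanh": math.tanh,
    "sigmoid": lambda a: 1.0 / (1.0 + math.exp(-a)),
}


def vanilla_rnn_cell(W, U, B, h_below, h_prev, activation="tanh"):
    """Compute h_t = activation(W . h_{t,l-1} + U . h_{t-1,l} + B)."""
    act = ACTIVATIONS[activation]
    Wx = matvec(W, h_below)
    Uh = matvec(U, h_prev)
    return [act(w + u + b) for w, u, b in zip(Wx, Uh, B)]


W = [[1.0, 0.0], [0.0, 1.0]]
U = [[0.5, 0.0], [0.0, 0.5]]
B = [0.0, -3.0]
h = vanilla_rnn_cell(W, U, B, h_below=[1.0, 1.0], h_prev=[2.0, 2.0], activation="relu")
print(h)  # [2.0, 0.0]
```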

LSTM

A four-gate long short-term memory recurrent cell initialized with lstm_forward::desc or lstm_backward::desc.

Note that for all tensors with a dimension depending on the number of gates, we implicitly require the order of these gates to be i, f, \(\tilde c\), and o. The following equation gives the mathematical description of these gates and output for the forward pass:

\[ \begin{align} i_t &= \sigma(W_i \cdot h_{t,l-1} + U_i \cdot h_{t-1, l} + B_i) \\ f_t &= \sigma(W_f \cdot h_{t,l-1} + U_f \cdot h_{t-1, l} + B_f) \\ \tilde c_t &= tanh(W_{\tilde c} \cdot h_{t,l-1} + U_{\tilde c} \cdot h_{t-1, l} + B_{\tilde c}) \\ o_t &= \sigma(W_o \cdot h_{t,l-1} + U_o \cdot h_{t-1, l} + B_o) \\ \\ c_t &= f_t * c_{t-1} + i_t * \tilde c_t \\ h_t &= tanh(c_t) * o_t \end{align} \]

where \(W_*\) are stored in weights_layer, \(U_*\) are stored in weights_iter and \(B_*\) are stored in bias.

- Note

- In order for the dimensions to be consistent, we require \(channels(src\_iter\_c) = channels(dst\_iter\_c) = channels(dst\_iter)\).
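The LSTM forward pass above can be sketched in plain Python. This is an illustration of the equations, not the DNNL implementation: `lstm_cell` is a hypothetical name, and the per-gate dictionaries keyed `"i"`, `"f"`, `"c"`, `"o"` are an assumption made for readability rather than the library's weight layout.

```python
import math


def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))


def matvec(M, v):
    """Dense matrix-vector product; M is a list of rows."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def lstm_cell(W, U, B, h_below, h_prev, c_prev):
    """One LSTM step; W, U, B map gate name -> matrix / bias vector."""

    def gate(name, act):
        Wx = matvec(W[name], h_below)
        Uh = matvec(U[name], h_prev)
        return [act(w + u + b) for w, u, b in zip(Wx, Uh, B[name])]

    i = gate("i", sigmoid)
    f = gate("f", sigmoid)
    c_tilde = gate("c", math.tanh)
    o = gate("o", sigmoid)
    # c_t = f_t * c_{t-1} + i_t * c~_t, then h_t = tanh(c_t) * o_t
    c = [ft * cp + it * ct for ft, cp, it, ct in zip(f, c_prev, i, c_tilde)]
    h = [math.tanh(ct) * ot for ct, ot in zip(c, o)]
    return h, c


# With all-zero parameters every sigmoid gate is 0.5 and the candidate is 0,
# so the cell state is simply halved.
zeros = {g: [[0.0]] for g in "ifco"}
zero_bias = {g: [0.0] for g in "ifco"}
h, c = lstm_cell(zeros, zeros, zero_bias, h_below=[1.0], h_prev=[1.0], c_prev=[2.0])
print(c)  # [1.0]
```

Both returned vectors have the same length, which reflects the requirement that dst_iter_c and dst_iter share a channel count.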

GRU

A three-gate gated recurrent unit cell, initialized with gru_forward::desc or gru_backward::desc.

Note that for all tensors with a dimension depending on the number of gates, we implicitly require the order of these gates to be u, r and \(\tilde{c}\). The following equation gives the mathematical definition of these gates.

\[ \begin{align} u_t &= \sigma(W_u \cdot h_{t,l-1} + U_u \cdot h_{t-1, l} + B_u) \\ r_t &= \sigma(W_r \cdot h_{t,l-1} + U_r \cdot h_{t-1, l} + B_r) \\ \tilde c_t &= tanh(W_o \cdot h_{t,l-1} + U_o \cdot (r_t * h_{t-1, l}) + B_o) \\ h_t &= u_t * h_{t-1, l} + (1 - u_t) * \tilde c_t \end{align} \]

where \(W_*\) are in weights_layer, \(U_*\) are in weights_iter, and \(B_*\) are stored in bias.
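A plain-Python sketch of the GRU equations may help make the role of the reset gate concrete. It is an illustration of the math only, not the DNNL implementation: `gru_cell` is a hypothetical name, and the dictionaries keyed `"u"`, `"r"`, `"o"` follow the subscripts used in the equations rather than any library layout.

```python
import math


def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))


def matvec(M, v):
    """Dense matrix-vector product; M is a list of rows."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def gru_cell(W, U, B, h_below, h_prev):
    """One GRU step; W, U, B map gate name -> matrix / bias vector."""

    def affine(name, recurrent_input):
        Wx = matvec(W[name], h_below)
        Uh = matvec(U[name], recurrent_input)
        return [w + u + b for w, u, b in zip(Wx, Uh, B[name])]

    u = [sigmoid(a) for a in affine("u", h_prev)]
    r = [sigmoid(a) for a in affine("r", h_prev)]
    # The reset gate scales h_{t-1,l} BEFORE the multiplication by U_o.
    reset_h = [rt * hp for rt, hp in zip(r, h_prev)]
    c_tilde = [math.tanh(a) for a in affine("o", reset_h)]
    return [ut * hp + (1.0 - ut) * ct for ut, hp, ct in zip(u, h_prev, c_tilde)]


# With all-zero parameters u_t = 0.5 and the candidate is 0,
# so the new state is half the previous one.
zeros = {g: [[0.0]] for g in "uro"}
zero_bias = {g: [0.0] for g in "uro"}
h = gru_cell(zeros, zeros, zero_bias, h_below=[1.0], h_prev=[4.0])
print(h)  # [2.0]
```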

- Note

- If you need to replace u_t by (1-u_t) when computing h_t, you can achieve this by multiplying \(W_u\), \(U_u\) and \(B_u\) by \(-1\). This is possible as \(u_t = \sigma(W_u \cdot h_{t,l-1} + U_u \cdot h_{t-1, l} + B_u)\), and \(1 - \sigma(a) = \sigma(-a)\).
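The identity used in the note follows from the definition of the logistic function \(\sigma(a) = 1 / (1 + e^{-a})\); the short derivation is spelled out here for completeness:

\[ \begin{align} 1 - \sigma(a) &= 1 - \frac{1}{1 + e^{-a}} = \frac{e^{-a}}{1 + e^{-a}} \\ &= \frac{1}{e^{a} + 1} = \sigma(-a) \end{align} \]

Negating \(W_u\), \(U_u\) and \(B_u\) negates the argument of \(\sigma\), so the update gate becomes \(1 - u_t\).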

Linear-Before-Reset GRU

A three-gate gated recurrent unit cell with the linear layer applied before the reset gate.

The following equation describes the mathematical behavior of the Linear-Before-Reset GRU cell.

\[ \begin{align} u_t &= \sigma(W_u \cdot h_{t,l-1} + U_u \cdot h_{t-1, l} + B_u) \\ r_t &= \sigma(W_r \cdot h_{t,l-1} + U_r \cdot h_{t-1, l} + B_r) \\ \tilde c_t &= tanh(W_o \cdot h_{t,l-1} + r_t *(U_o \cdot h_{t-1, l} + B_{u'}) + B_o) \\ h_t &= u_t * h_{t-1, l} + (1 - u_t) * \tilde c_t \end{align} \]

Note that for all tensors with a dimension depending on the number of gates, except the bias, we implicitly require the order of these gates to be u, r and \(\tilde{c}\). For the bias tensor, we implicitly require the order of the gates to be u, r, \(\tilde{c}\) and \(u'\).

- Note

- If you need to replace u_t by (1-u_t) when computing h_t, you can achieve this by multiplying \(W_u\), \(U_u\) and \(B_u\) by \(-1\). This is possible as \(u_t = \sigma(W_u \cdot h_{t,l-1} + U_u \cdot h_{t-1, l} + B_u)\), and \(1 - \sigma(a) = \sigma(-a)\).
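The difference from the regular GRU is where the reset gate is applied: here it multiplies the result of the recurrent linear layer, which also carries its own bias \(B_{u'}\). The following plain-Python sketch illustrates the equations only; `lbr_gru_cell` is a hypothetical name, and the dictionary keys (`"u"`, `"r"`, `"o"`, plus `"u'"` for the extra bias entry) are assumptions chosen to mirror the subscripts above.

```python
import math


def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))


def matvec(M, v):
    """Dense matrix-vector product; M is a list of rows."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def lbr_gru_cell(W, U, B, h_below, h_prev):
    """One linear-before-reset GRU step.

    W and U hold three gates ("u", "r", "o"); B holds four entries
    ("u", "r", "o", "u'"), matching the bias gate order in the text.
    """

    def gate(name):
        Wx = matvec(W[name], h_below)
        Uh = matvec(U[name], h_prev)
        return [sigmoid(w + u + b) for w, u, b in zip(Wx, Uh, B[name])]

    u = gate("u")
    r = gate("r")
    # Linear layer first (U_o . h_{t-1,l} + B_u'), reset gate second.
    lin = [x + b for x, b in zip(matvec(U["o"], h_prev), B["u'"])]
    Wx = matvec(W["o"], h_below)
    c_tilde = [math.tanh(w + rt * l + b) for w, rt, l, b in zip(Wx, r, lin, B["o"])]
    return [ut * hp + (1.0 - ut) * ct for ut, hp, ct in zip(u, h_prev, c_tilde)]


# Only the extra bias B_u' is non-zero: r_t = 0.5, so c~_t = tanh(0.5 * 2.0).
zeros = {g: [[0.0]] for g in "uro"}
bias = {"u": [0.0], "r": [0.0], "o": [0.0], "u'": [2.0]}
h = lbr_gru_cell(zeros, zeros, bias, h_below=[1.0], h_prev=[4.0])
print(abs(h[0] - (2.0 + 0.5 * math.tanh(1.0))) < 1e-12)  # True
```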

Data Types

The following table lists the combination of data types supported by the RNN primitive for each input and output memory object.

| Propagation | Input data | Recurrent data | Weights | Bias | Output data |
|---|---|---|---|---|---|
| Forward / Backward | f32 | f32 | f32 | f32 | f32 |
| Forward | f16 | f16 | f16 | f16 | f16 |
| Forward inference | u8 | u8 | s8 | f32 | u8, f32 |

- Warning

- There might be hardware-specific and/or implementation-specific restrictions. Check the Implementation Limitations section below.

Data and Weights Formats

In the DNNL programming model, the RNN primitive is one of a few that support the placeholder memory format memory::format::any (shortened to any from now on) and can define the formats of the data and weight memory objects based on the primitive parameters.

The following table summarizes the data layouts supported by the RNN primitive.

| Input/Output Data | Recurrent Data | Weights | Bias |
|---|---|---|---|
| any | any | any | ldgo |
| ntc, tnc | ldnc | ldigo, ldgoi | ldgo |

While an RNN primitive can be created with memory formats specified explicitly, the performance is likely to be sub-optimal. When using any, it is necessary to first create an RNN primitive descriptor and then query it for the actual formats of the data and weight memory objects.

- Note

- The RNN primitive supports padded tensors and views. So even if two memory descriptors share the same data layout, they might still be different.
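The layout names in the table read left to right from the outermost to the innermost dimension: t is time, n is batch, c is channels, l is layers, d is directions, i is input channels, g is gates and o is output channels. The sketch below computes the flat element offset for a fully dense tensor, assuming no padding and no views (which, as the note says, the primitive also supports). `dense_offset` is a hypothetical helper, not a DNNL function.

```python
def dense_offset(tag, dims, index):
    """Flat offset of `index` in a dense tensor laid out according to `tag`.

    tag:   layout name such as "tnc" or "ldigo" (outermost letter first).
    dims:  mapping letter -> size of that dimension.
    index: mapping letter -> position along that dimension.
    """
    offset = 0
    for letter in tag:
        offset = offset * dims[letter] + index[letter]
    return offset


# Same logical element of a (T=3, N=2, C=4) input tensor in both data layouts.
data_dims = {"t": 3, "n": 2, "c": 4}
data_idx = {"t": 1, "n": 1, "c": 2}
print(dense_offset("tnc", data_dims, data_idx))  # 14
print(dense_offset("ntc", data_dims, data_idx))  # 18

# Same logical weight element in both weight layouts.
w_dims = {"l": 1, "d": 1, "i": 2, "g": 4, "o": 3}
w_idx = {"l": 0, "d": 0, "i": 1, "g": 2, "o": 1}
print(dense_offset("ldigo", w_dims, w_idx))  # 19
print(dense_offset("ldgoi", w_dims, w_idx))  # 15
```

The same logical element lands at a different address in each layout, which is why a memory object created with one format generally has to be reordered before it can be used with a primitive that expects another.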

Considerations for Training

When using the RNN API for training, the forward pass should use the forward_training propagation kind, and a workspace should be passed to both the forward pass and the backward pass. Note that after executing the backward pass, the workspace is no longer valid and must be populated again by another forward pass.

Implementation Limitations

- Refer to Data Types for limitations related to data type support.

- GPU

  - No support for GRU