To push higher performance during inference computations, recent work has focused on computing at a lower precision (that is, shrinking the size of data for activations and weights) to achieve higher throughput. Eight-bit computations (referred to as int8) offer improved performance over higher-precision types because they enable packing more data into a single instruction, at the cost of reduced (but acceptable) accuracy.

There are different ways to use lower precision to perform inference. The Primitive Attributes: Quantization page describes what kind of quantization model oneDNN supports.

To operate with int8 data types from a higher-precision format (for example, 32-bit floating point), data must first be quantized. The quantization process converts a given input into a lower-precision format. The choice of scaling factors determines how precisely the original values are represented, and therefore the accuracy of the result.

The data range is usually obtained by sampling the dataset of previous executions in the original data type (for example, the activations and weights from training in f32):

\[ R_{\{\alpha,w\}} = \max\left(\left|T_{\{\alpha,w\}}\right|\right) \]

Here \(T_{\{\alpha,w\}}\) is a tensor corresponding to either the weights \(w\) or the activations \(\alpha\). Establishing the range of values used in the computation, and selecting a proper scaling factor, prevents overflow or underflow during computation of the lower-precision results.
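The range computation is a single reduction over the sampled tensor. The following plain-Python sketch illustrates it. The helper name `tensor_range` and the sample weights are hypothetical and are not part of oneDNN.

```python
# Range R = max(|T|) of a sampled f32 tensor (activations or weights).
def tensor_range(values):
    """Return the largest absolute value in a sequence of floats."""
    if not values:
        raise ValueError("cannot compute the range of an empty tensor")
    return max(abs(v) for v in values)

# Hypothetical weights sampled from a previous f32 run.
sampled_weights = [-5.1, 6.8, -1.2, 9.8]
R_w = tensor_range(sampled_weights)
print(R_w)  # 9.8
```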

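The quantization factor converts the original values into the corresponding int8 range and is calculated as:

\[ Q_{\alpha} = \frac{255}{R_{\alpha}}, \qquad Q_{w} = \frac{127}{R_{w}} \]

Here \(R_{\alpha}\) and \(R_{w}\) are the ranges of the activations and weights. \(Q_{\alpha}\) is the quantization factor for activations with non-negative values (stored as u8), and \(Q_{w}\) is the quantization factor for weights (stored as s8).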
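Each factor maps the sampled range onto the target integer range. The following plain-Python sketch computes both factors from hypothetical ranges. `quantization_factors` is an illustrative helper, not a oneDNN function.

```python
def quantization_factors(r_alpha, r_w):
    """Return (Q_alpha, Q_w) for u8 activations and s8 weights."""
    if r_alpha <= 0 or r_w <= 0:
        raise ValueError("ranges must be positive")
    q_alpha = 255.0 / r_alpha  # maps [0, R_alpha] onto [0, 255]
    q_w = 127.0 / r_w          # maps [-R_w, R_w] onto [-127, 127]
    return q_alpha, q_w

q_alpha, q_w = quantization_factors(15.0, 9.8)
print(q_alpha)        # 17.0
print(round(q_w, 2))  # 12.96
```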

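The quantized activation, weights, and bias values are calculated from the quantization factors \(Q_{\alpha}\) and \(Q_{w}\) as:

\[
\begin{aligned}
\alpha_{u8} &= \lceil Q_{\alpha} \alpha_{f32} \rceil \in [0, 255] \\
W_{s8} &= \lceil Q_{w} W_{f32} \rceil \in [-127, 127] \\
b_{s32} &= \lceil Q_{\alpha} Q_{w} b_{f32} \rceil \in [-2^{31}, 2^{31}-1]
\end{aligned}
\]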

Here \( \lceil \cdot \rceil \) denotes rounding according to the active rounding mode (typically determined by the MXCSR register; the default value is RoundNearestEven).
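The three quantization formulas differ only in their factor and target range. The following plain-Python sketch implements them. Python's built-in `round()` rounds halves to even, which matches the RoundNearestEven default. Saturating to the target range is an added safeguard (an assumption, for inputs that fall outside the sampled range). The function names are hypothetical.

```python
def quantize(values, q, lo, hi):
    """Scale by q, round half to even, and saturate to [lo, hi]."""
    # round() uses round-half-to-even; min/max saturate out-of-range
    # results (an assumption: values within the sampled range never need it).
    return [min(max(round(q * v), lo), hi) for v in values]

def quantize_activations(a_f32, q_alpha):
    return quantize(a_f32, q_alpha, 0, 255)

def quantize_weights(w_f32, q_w):
    return quantize(w_f32, q_w, -127, 127)

def quantize_bias(b_f32, q_alpha, q_w):
    # Bias uses the product of both factors and is stored as s32.
    return quantize(b_f32, q_alpha * q_w, -2**31, 2**31 - 1)

print(quantize([0.5, 1.5, 2.5], 1.0, -127, 127))  # [0, 2, 2]
```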

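When the destination value (for example, the output of a convolution) is stored as a signed 32-bit integer, the result is scaled by the product of the same quantization factors:

\[ X_{s32} = W_{s8} \times \alpha_{u8} + b_{s32} \approx Q_{\alpha} Q_{w} X_{f32}, \qquad \text{where } X_{f32} = W_{f32} \times \alpha_{f32} + b_{f32} \]

The approximation reflects the rounding applied to the quantized inputs.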
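To see the relationship \(X_{s32} \approx Q_{\alpha} Q_{w} X_{f32}\) numerically, the following plain-Python sketch computes one output element both ways: with integer-only accumulation, and in f32 scaled by \(Q_{\alpha} Q_{w}\). The input values are hypothetical, and the code only models the arithmetic, not a oneDNN primitive.

```python
def quantize(values, q, lo, hi):
    """Scale, round half to even, and saturate to [lo, hi]."""
    return [min(max(round(q * v), lo), hi) for v in values]

# Hypothetical f32 inputs for one output element X = W . alpha + b.
a_f32 = [1.2, 0.4, 2.9]
w_f32 = [-0.8, 1.5, 0.3]
b_f32 = 0.25

q_alpha = 255.0 / max(abs(v) for v in a_f32)
q_w = 127.0 / max(abs(v) for v in w_f32)

a_u8 = quantize(a_f32, q_alpha, 0, 255)
w_s8 = quantize(w_f32, q_w, -127, 127)
b_s32 = round(q_alpha * q_w * b_f32)

# Integer-only accumulation, as an int8 computation would perform it.
x_s32 = sum(w * a for w, a in zip(w_s8, a_u8)) + b_s32
# Reference result in f32, scaled by the same factors.
x_f32 = sum(w * a for w, a in zip(w_f32, a_f32)) + b_f32

print(x_s32, q_alpha * q_w * x_f32)  # close, but not identical
```

The gap between the two numbers comes entirely from rounding the inputs. It is relatively large here because the products partially cancel.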

Conversely, the dequantized value is calculated as:

\[ X_{f32} \approx \frac{1}{Q_{\alpha} Q_{w}} X_{s32} \]
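Dequantization divides by the same product of factors. The following plain-Python sketch round-trips a hypothetical f32 value through s32 and back. Because rounding changes the s32 value by at most 0.5, the recovered value is within \(0.5 / (Q_{\alpha} Q_{w})\) of the original. `dequantize` is an illustrative name.

```python
def dequantize(x_s32, q_alpha, q_w):
    """Recover an approximate f32 value from an s32 result."""
    return x_s32 / (q_alpha * q_w)

# Hypothetical factors and a hypothetical f32 result.
q_alpha, q_w = 17.0, 127.0 / 9.8
x_f32 = 3.0

x_s32 = round(q_alpha * q_w * x_f32)  # quantized result
x_back = dequantize(x_s32, q_alpha, q_w)
print(x_s32, x_back)
```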

To show how the quantization parameters are obtained, suppose we first start with a set of high-precision input and output values. These values come from sampling a previously executed training run and are stored as 32-bit floating point values:

The scaling factors are:

Finally, the quantized input values for the int8 operation are calculated as:

These arrays are the new inputs for the int8 net.
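The same walkthrough can be sketched end to end in plain Python. The sample values below are hypothetical and illustrative only; they do not come from a real training run. The bias uses \(Q_{\alpha} Q_{w}\) rather than its own range, as in the bias formula above.

```python
def quantize(values, q, lo, hi):
    """Scale, round half to even, and saturate to [lo, hi]."""
    return [min(max(round(q * v), lo), hi) for v in values]

# Hypothetical f32 samples standing in for a previous training run.
activations = [15.0, 14.0, 15.0, 8.0, 11.0]
weights = [-5.1, 6.8, -1.2, 9.8]
bias = [2.4, -5.2, -8.0]

# Ranges and quantization factors.
r_alpha = max(abs(v) for v in activations)  # 15.0
r_w = max(abs(v) for v in weights)          # 9.8
q_alpha = 255.0 / r_alpha                   # 17.0
q_w = 127.0 / r_w                           # about 12.96

# Quantized inputs for the int8 operation.
a_u8 = quantize(activations, q_alpha, 0, 255)
w_s8 = quantize(weights, q_w, -127, 127)
b_s32 = quantize(bias, q_alpha * q_w, -2**31, 2**31 - 1)

print(a_u8)   # [255, 238, 255, 136, 187]
print(w_s8)   # [-66, 88, -16, 127]
print(b_s32)  # [529, -1146, -1762]
```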

oneDNN supports int8 computations for inference by allowing one to specify that primitives' input and output memory objects use int8 data types. int8 primitive implementations are optimized for high performance on compatible hardware (see Data Types).

Scaling factors can be configured using primitive attributes. It is also possible to specify fused post-ops. All primitives support the attributes, but not all combinations of parameters are supported. In the case of an unsupported combination, the library returns an error.

In oneDNN, the scaling factor is applied to the output of a primitive. Input transformations (for example, quantizing or dequantizing the source, weights, and bias for int8) are performed separately with the reorder primitive.

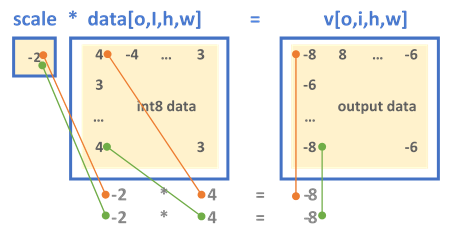

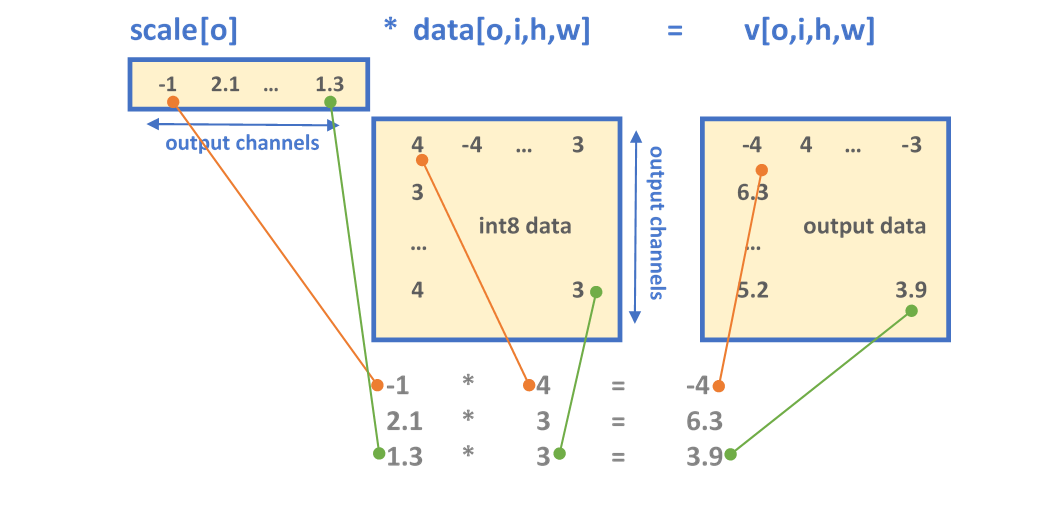

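oneDNN has two formats for defining the output scaling factor. Depending on the scaling mask, either a single floating-point value scales the output uniformly across all dimensions (mask = 0), or an array of floating-point values is applied along selected dimensions (for example, one value per output channel). Bit \(i\) of the mask selects logical dimension \(i\) of the tensor, independent of the memory format the primitive uses. For example, for an \((n, c, h, w)\) output, mask = 2 (\(2^1\)) selects the channel dimension.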
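The mask rule determines how many scale values a primitive expects: the product of the sizes of the selected logical dimensions. The following plain-Python sketch makes that count explicit. `num_scales` is a hypothetical helper for illustration, not a oneDNN function, and the tensor shapes are made up.

```python
from math import prod

def num_scales(dims, mask):
    """Number of scale values implied by a mask over logical dims.

    Bit i of the mask selects logical dimension i; mask = 0 selects no
    dimension, so the whole tensor shares one scale.
    """
    return prod(d for i, d in enumerate(dims) if mask & (1 << i))

# Logical (n, c, h, w) output of shape 2 x 3 x 4 x 4.
dims = [2, 3, 4, 4]
print(num_scales(dims, 0))       # 1 -> single common scale
print(num_scales(dims, 1 << 1))  # 3 -> one scale per channel

# Logical (g, oc, ic, kh, kw) weights: mask 3 selects groups and output channels.
print(num_scales([2, 8, 4, 3, 3], (1 << 0) | (1 << 1)))  # 16
```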

Fused post-ops allow chaining computations. Note that the resulting output value from post-ops is always affected by the scaling factor.
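Because the scale is applied to the primitive's result, any post-op sees the scaled value. The following plain-Python sketch shows why the order matters. It assumes the output scale is applied to the s32 result before the post-op chain. It uses a bounded ReLU (clip to \([0, 6]\)), because with a plain ReLU and a positive scale the order would make no difference. All names and values are hypothetical.

```python
def bounded_relu(x, alpha=6.0):
    """Clip x to [0, alpha]; a non-linear post-op where ordering matters."""
    return min(max(x, 0.0), alpha)

def int8_output(acc_s32, output_scale, post_ops):
    """Apply the output scale first (an assumption), then each post-op in order."""
    x = output_scale * acc_s32
    for op in post_ops:
        x = op(x)
    return x

acc = [-400, 0, 661]                   # hypothetical s32 results
scale = 1.0 / (17.0 * (127.0 / 9.8))   # hypothetical dequantizing scale
out = [int8_output(a, scale, [bounded_relu]) for a in acc]
print(out)  # the last value is about 3.0004, not clipped to 6
```

Scaling 661 first gives roughly 3.0, which passes through the clip unchanged. Clipping first would have saturated 661 to 6 before scaling.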

The CNN int8 inference example walks through the steps of int8 inference.