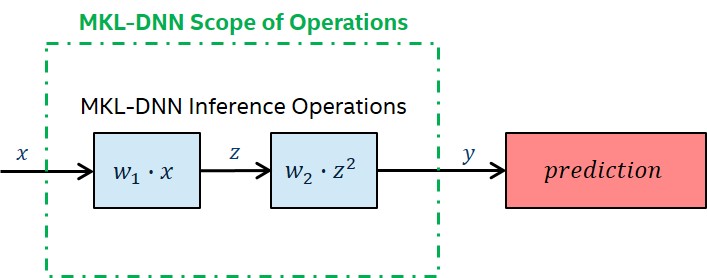

Intel MKL-DNN includes primitives for operations throughout a deep learning network topology. However, it is important to note the scope of Intel MKL-DNN is limited to performance critical functionality and the library does not provide all the functions necessary to implement deep learning workloads, for instance data preprocessing or computing loss function. The soft-max classifier is the sole classifier included, but the application of other classifier types will require user's own implementations. The scope of the library is depicted in the following image:

Best Practices for Inference in Intel MKL-DNN

fp32 Inference

Use Forward Inference Primitives

Intel MKL-DNN provides a forward-pass version of each primitive that avoids storing the information required for a backward pass (as in training).

Pass the mkldnn::prop_kind::forward_inference argument when creating the operation descriptor, for example for a convolution.
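The effect of the propagation kind can be illustrated without the library. The following is a minimal sketch, not MKL-DNN code: `PropKind`, `PoolResult`, and `max_pool_1d` are hypothetical names, and max pooling is chosen only because it makes the extra training-time state (the position of each maximum) easy to see.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical stand-in for mkldnn::prop_kind; not the library's type.
enum class PropKind { forward_training, forward_inference };

struct PoolResult {
    std::vector<float> dst;              // pooled output
    std::vector<std::size_t> workspace;  // argmax positions, filled only for training
};

// 1D max pooling over non-overlapping windows of size `window`.
// Trailing elements that do not fill a whole window are ignored.
PoolResult max_pool_1d(const std::vector<float>& src, std::size_t window,
                       PropKind kind) {
    PoolResult r;
    if (window == 0) return r;
    for (std::size_t start = 0; start + window <= src.size(); start += window) {
        std::size_t best = start;
        for (std::size_t i = start + 1; i < start + window; ++i)
            if (src[i] > src[best]) best = i;
        r.dst.push_back(src[best]);
        // Only a backward pass needs to know where each maximum came from.
        if (kind == PropKind::forward_training) r.workspace.push_back(best);
    }
    return r;
}
```

Both kinds produce the same output; the inference variant simply skips the bookkeeping that only a backward pass would consume.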

Layout Propagation

Compute-intensive Intel MKL-DNN primitives execute with the highest performance on CPU-friendly data formats. See the library's description of data formats for details.

Performance gains are maximized by reordering once and then propagating the CPU-friendly format through as many layers of the topology as possible. For memory descriptors that will be passed to compute-intensive primitives, Intel MKL-DNN provides format_tag=any, which lets the primitive choose its preferred format. The compute-intensive primitive types in Intel MKL-DNN are Convolution, Inner Product, and RNN.

To accomplish this propagation in a robust manner, it is recommended to follow these steps:

A. On compute-intensive operations:

- Pass the format_tag=any when creating Intel MKL-DNN memory descriptor for source, destination, and weights memory

- Use these three memory descriptors with format_tag=any to create operation descriptor

- Use operation descriptor to create engine-aware primitive descriptor

- Query the primitive descriptor with .src_desc() method to get recommended format

- Write conditional reorder to execute only if user source data or weights don't match the recommended format

- Create primitive and add it to stream with primitive.execute(stream, args)

B. On non-intensive operations:

- Query output primitive descriptor with .dst_desc() from previous operation to find current layout

- Pass current layout with format_tag=.dst_desc() when creating non-intensive operation descriptor

- Create primitive and add it to stream with operation.execute(stream, args)

Now let's take a look at the code syntax to accomplish the compute-intensive steps:

Pass the format_tag=any when creating Intel MKL-DNN memory descriptor for source, destination, and weights memory

Use these three memory descriptors with format_tag=any to create operation descriptor

Use operation descriptor to create engine-aware primitive descriptor

Query the primitive descriptor with .src_desc() method to get recommended format

Write conditional reorder to execute only if user source data or weights don't match the recommended format (Note: Do this for weight_memory as well)

Create primitive and add it to stream with primitive.execute(stream, args)
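The conditional reorder step is the part most easily gotten wrong, so here is a library-independent sketch of the idea. It is an illustration under stated assumptions, not MKL-DNN code: `Layout`, `Tensor`, `reorder`, and `to_recommended` are hypothetical names, and the two layouts stand in for "the user's format" and "the format recommended by the primitive descriptor".

```cpp
#include <cstddef>
#include <vector>

// Hypothetical stand-ins for illustration; these are not MKL-DNN types.
enum class Layout { nchw, nhwc };

struct Tensor {
    std::size_t n, c, h, w;   // logical dimensions
    Layout layout;            // physical ordering of `data`
    std::vector<float> data;  // n * c * h * w elements
};

// Linear offset of logical element (in, ic, ih, iw) under layout `l`.
std::size_t offset(const Tensor& t, Layout l, std::size_t in, std::size_t ic,
                   std::size_t ih, std::size_t iw) {
    if (l == Layout::nchw) return ((in * t.c + ic) * t.h + ih) * t.w + iw;
    return ((in * t.h + ih) * t.w + iw) * t.c + ic;  // nhwc
}

// Unconditional reorder: copies every element into the target layout.
Tensor reorder(const Tensor& src, Layout target) {
    Tensor dst{src.n, src.c, src.h, src.w, target,
               std::vector<float>(src.data.size())};
    for (std::size_t in = 0; in < src.n; ++in)
        for (std::size_t ic = 0; ic < src.c; ++ic)
            for (std::size_t ih = 0; ih < src.h; ++ih)
                for (std::size_t iw = 0; iw < src.w; ++iw)
                    dst.data[offset(dst, target, in, ic, ih, iw)] =
                        src.data[offset(src, src.layout, in, ic, ih, iw)];
    return dst;
}

// Conditional reorder: does the copy only when the layouts differ.
// Returns the user's tensor untouched when it already matches; otherwise
// fills `scratch` and returns it. `reordered` reports which path was taken.
const Tensor& to_recommended(const Tensor& user, Layout recommended,
                             Tensor& scratch, bool& reordered) {
    reordered = (user.layout != recommended);
    if (!reordered) return user;
    scratch = reorder(user, recommended);
    return scratch;
}
```

In the library, the comparison would be between the user memory's descriptor and the one returned by the primitive descriptor query (for example .src_desc()); the same check applies to the weights.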

Cache Weights

Weights are accessed many times during batched inference. At inference time, these weights are essentially constants in the mapping function that the network applies to the input data. As such, the weights should be reordered (if necessary) once and then used in the reordered form for the duration of the execution. Caching lets the computation use them much as a mathematical function applies a constant, i.e., "grab-and-go" with no overhead for creation or reorder.
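A minimal sketch of this reorder-once pattern follows. It does not use MKL-DNN: `WeightCache` is a hypothetical class, and a plain matrix transpose stands in for the reorder into a CPU-friendly format.

```cpp
#include <cstddef>
#include <utility>
#include <vector>

// Hypothetical helper: holds row-major weights (rows x cols) and lazily
// builds a reordered copy. Here the "reorder" is simply a transpose.
class WeightCache {
public:
    WeightCache(std::size_t rows, std::size_t cols, std::vector<float> user)
        : rows_(rows), cols_(cols), user_(std::move(user)) {
        user_.resize(rows_ * cols_);  // pad with zeros if too few values given
    }

    // Computes y = W * x using the cached, reordered weights.
    std::vector<float> apply(const std::vector<float>& x) {
        const std::vector<float>& wt = reordered();
        std::vector<float> y(rows_, 0.f);
        for (std::size_t c = 0; c < cols_ && c < x.size(); ++c)
            for (std::size_t r = 0; r < rows_; ++r)
                y[r] += wt[c * rows_ + r] * x[c];
        return y;
    }

    // Number of times the reorder actually ran.
    int reorder_count() const { return reorder_count_; }

private:
    // Reorders on first use only; later calls return the cached copy.
    const std::vector<float>& reordered() {
        if (!ready_) {
            reordered_.resize(rows_ * cols_);
            for (std::size_t r = 0; r < rows_; ++r)
                for (std::size_t c = 0; c < cols_; ++c)
                    reordered_[c * rows_ + r] = user_[r * cols_ + c];
            ready_ = true;
            ++reorder_count_;
        }
        return reordered_;
    }

    std::size_t rows_, cols_;
    std::vector<float> user_;
    std::vector<float> reordered_;
    bool ready_ = false;
    int reorder_count_ = 0;
};
```

However many inputs are passed through `apply`, `reorder_count()` stays at one, which is the behavior to aim for with real weights memory.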

Primitive Reuse

There is JIT compilation overhead associated with primitive creation. It is recommended to create each primitive only once and reuse it wherever possible.
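One way to enforce create-once semantics is to keep primitives in a cache keyed by the parameters that define them. The sketch below assumes a shape-only key and uses hypothetical names (`ConvPrimitive`, `PrimitiveCache`); a counter stands in for the JIT compilation cost, and no MKL-DNN calls are made.

```cpp
#include <cstddef>
#include <map>
#include <tuple>

// Hypothetical stand-in for a created primitive. In the real library,
// construction is where the JIT compilation overhead would be paid.
struct ConvPrimitive {
    std::size_t channels, height, width;
};

class PrimitiveCache {
public:
    // Returns the primitive for this shape, creating it only on first request.
    const ConvPrimitive& get(std::size_t c, std::size_t h, std::size_t w) {
        auto key = std::make_tuple(c, h, w);
        auto it = cache_.find(key);
        if (it == cache_.end()) {
            ++creations_;  // stands in for the one-time creation cost
            it = cache_.emplace(key, ConvPrimitive{c, h, w}).first;
        }
        return it->second;
    }

    // Number of primitives actually created so far.
    int creations() const { return creations_; }

private:
    std::map<std::tuple<std::size_t, std::size_t, std::size_t>, ConvPrimitive>
        cache_;
    int creations_ = 0;
};
```

Repeated requests for the same shape return the same object, so the creation cost is paid once per distinct configuration rather than once per inference call.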

Fused Primitives

Intel MKL-DNN provides fused versions of primitives that attach a non-intensive operation to the end of a compute-intensive operation and then execute both in a single pass, reducing the number of memory accesses needed for the combined operations. The non-intensive operation is added as a post-op attribute to the compute-intensive primitive descriptor. Note that post-ops do not change the number of inputs or outputs of the primitives. See the "Post-ops and Attributes" section of the doc for each primitive type in /docs/primitive/ for a list of available fused primitives.

A good example is adding ReLU as a post-op to convolution, which we will use as a demonstration below. The steps are:

- Create a post_op for fused ReLU

- Create primitive attribute and add the post_op

- Create a convolution descriptor

- Create a convolution primitive descriptor, passing post_op as an arg

Create a post_op for fused ReLU

Create primitive attribute and add the post_op

Create a convolution descriptor

Create a convolution primitive descriptor, passing the post-op infused attrs as an arg
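To show why fusion saves memory traffic, the following sketch applies ReLU inside a toy 1D convolution loop instead of in a second pass. It is a conceptual illustration only: `PostOp`, `conv1d`, and `relu_inplace` are hypothetical names and do not correspond to the library's post-op or attribute types.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Hypothetical post-op selector; not the library's post-op type.
enum class PostOp { none, relu };

// "Valid" 1D convolution (cross-correlation, as is usual in deep learning).
// With PostOp::relu the activation is applied before the result is stored,
// so the destination is written once and never re-read.
std::vector<float> conv1d(const std::vector<float>& src,
                          const std::vector<float>& weights, PostOp post) {
    std::vector<float> dst;
    if (weights.empty() || src.size() < weights.size()) return dst;
    dst.resize(src.size() - weights.size() + 1);
    for (std::size_t i = 0; i < dst.size(); ++i) {
        float acc = 0.f;
        for (std::size_t k = 0; k < weights.size(); ++k)
            acc += src[i + k] * weights[k];
        if (post == PostOp::relu) acc = std::max(acc, 0.f);  // fused step
        dst[i] = acc;
    }
    return dst;
}

// Unfused reference: a separate pass that reads and rewrites every element.
void relu_inplace(std::vector<float>& v) {
    for (float& x : v) x = std::max(x, 0.f);
}
```

The fused call takes the same inputs and produces the same single output as the unfused convolution, which mirrors the point that post-ops do not change the number of inputs or outputs.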

int8 Inference

Intel MKL-DNN supports low-precision int8 for inference execution. Note that not all primitives have int8 versions: for some, the speed benefit would be minimal or the loss in accuracy unacceptable. Also, the soft-max classifier supports only fp32, so int8 inference requires a reorder before executing this primitive.

By default, the Intel MKL-DNN reorder primitive does not scale upon casting to int8. To compress fp32 data to int8 precision while still preserving the entire shape of the distribution, a process called quantization must be applied. Quantization scales the data based on its range to efficiently fill the bits available for the int8 type.
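As a rough illustration of range-based scaling, the sketch below uses symmetric max-abs quantization, which is one common scheme and is an assumption here rather than a statement of how the library computes its factors. The function names `int8_scale`, `quantize`, and `dequantize` are hypothetical.

```cpp
#include <algorithm>
#include <cmath>
#include <cstdint>
#include <vector>

// Scale that maps the largest magnitude in `data` to 127.
// Returns 1 for empty or all-zero input to avoid dividing by zero.
float int8_scale(const std::vector<float>& data) {
    float max_abs = 0.f;
    for (float x : data) max_abs = std::max(max_abs, std::fabs(x));
    return max_abs > 0.f ? 127.f / max_abs : 1.f;
}

// Scales, rounds to nearest, and saturates to [-127, 127].
std::vector<std::int8_t> quantize(const std::vector<float>& data, float scale) {
    std::vector<std::int8_t> q;
    q.reserve(data.size());
    for (float x : data) {
        float r = std::round(x * scale);
        r = std::min(127.f, std::max(-127.f, r));
        q.push_back(static_cast<std::int8_t>(r));
    }
    return q;
}

// Approximate inverse: maps int8 values back to the fp32 range.
std::vector<float> dequantize(const std::vector<std::int8_t>& q, float scale) {
    std::vector<float> out;
    out.reserve(q.size());
    for (std::int8_t v : q) out.push_back(static_cast<float>(v) / scale);
    return out;
}
```

For data in the range [-2, 2] the scale is 63.5, so the values spread across the full int8 range; casting without a scale would instead collapse them onto a handful of integers.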

To achieve quantization upon casting, the user must provide a few inputs to Intel MKL-DNN in order to use int8 inference:

- Specify data type at creation of primitive descriptor (int8 in this case)

- Provide a scaling factor for Intel MKL-DNN reorder primitive

- Provide an output scaling factor for the operation primitive

Please see the dedicated section on low precision computations in Intel MKL-DNN for a detailed discussion, including how to calculate the scaling factors.